Introduction to Agentic Workflows

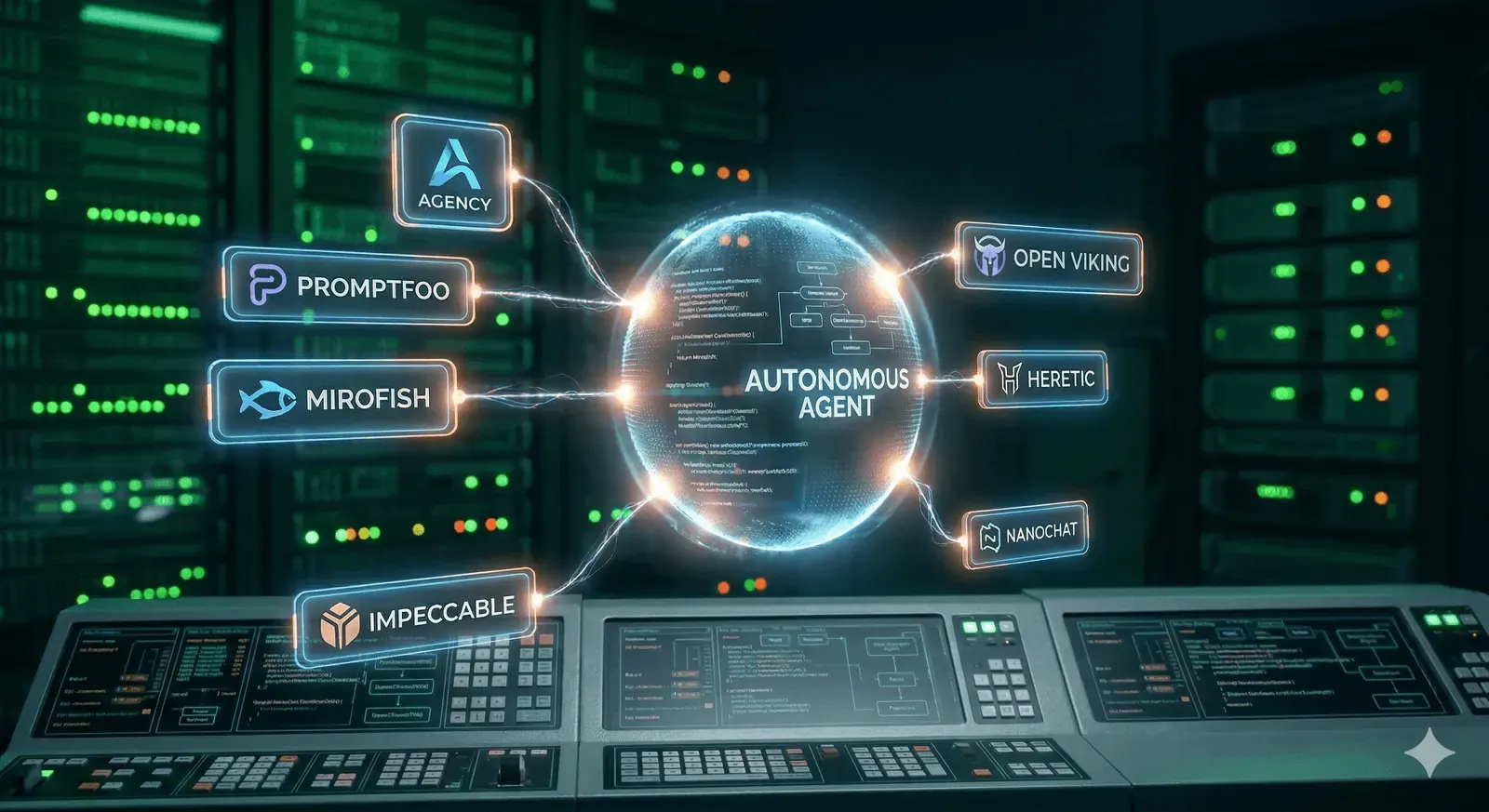

The landscape of Artificial Intelligence is shifting from “Chat-based AI” to “Agentic AI.” While standard LLM interactions involve a single prompt and response, agentic workflows allow AI to iteratively reason, use tools, and maintain state to solve complex, multi-step problems.

The core of this revolution lies in a specific set of frameworks that provide the orchestration logic, memory management, and tool integration necessary for autonomy. This guide focuses on the primary Agentic Tools that serve as the foundation of the field, with subsequent sections explaining how to implement and optimize them.

Read This Like a PlaybookThis list is most useful when you evaluate each tool by role: orchestration, testing, context management, UI quality, and model control.

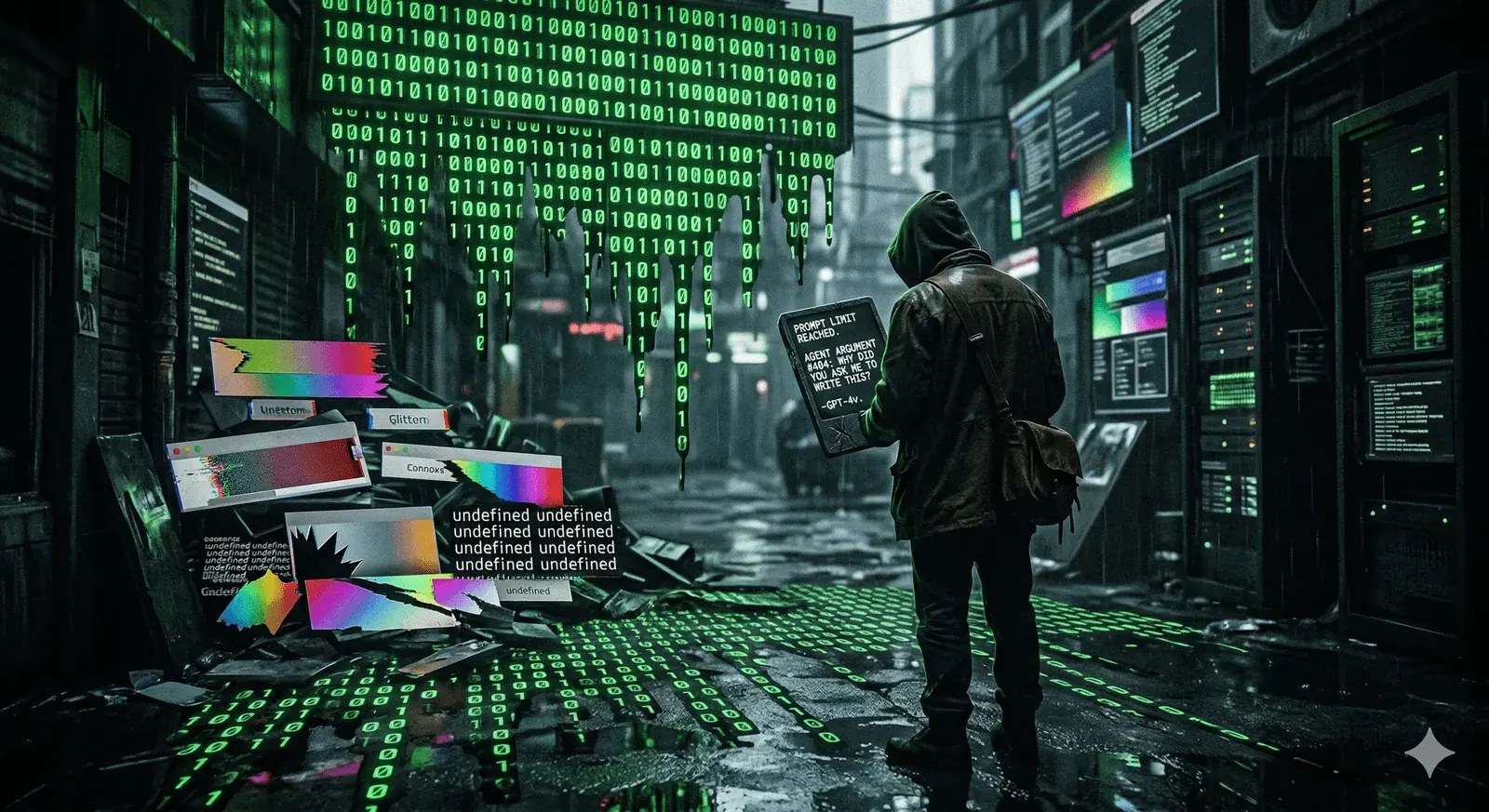

The year is 2026, and the “craft” of coding has entered its dark age. We are living in the era of Slop Overflow, where “vibe coding” has replaced architecture and AI agents argue in the terminal over lines of code you didn’t even write.

As manual coding becomes a “disadvantage,” the only path forward is to stop being the laborer and start being the manager. To stay ahead of the curve, you must learn to orchestrate the machines. Here are seven open-source projects designed to help you whip your AI agents into shape and build highly effective automated pipelines.

Editorial ContextThe examples below are framed as strategic tooling options, not one-size-fits-all defaults. Choose based on your architecture, cost profile, and risk tolerance.

1. Agency

The Automated Startup Staffing Solution

In the old days, being a full-stack developer meant mastering CSS, databases, and DevOps. In 2026, it means hiring the right agents. Agency provides a library of open-source agent templates that mimic every role in a tech startup.

- How it works: Instead of one generic chatbot, you deploy specialized personas—a Front-end Architect, a Growth Hacker, and a Twitter Engager.

- The Benefit: By combining these templates within environments like Claude Code, you can move from an idea to a functional product without manually implementing the “personality” or logic for each department.

When To UseUse this pattern when your bottleneck is role definition, not raw model capability.

2. Promptfoo

The Unit Testing Framework for Prompts

When your entire codebase is generated by natural language, your “code” is actually your prompt. Promptfoo (recently acquired by OpenAI) treats prompts like software modules that require rigorous testing.

- Model Benchmarking: It allows you to run the same prompt across different models (GPT-4, Claude 3.5, Gemini 1.5) to see which outputs the highest quality result for your specific use case.

- Red Teaming: It features automated “red team” attacks to see if a 14-year-old on Discord can trick your chatbot into revealing your private API keys via prompt injection.

Production GuardrailTreat prompt tests like CI checks. If prompts are code, evals are your test suite.

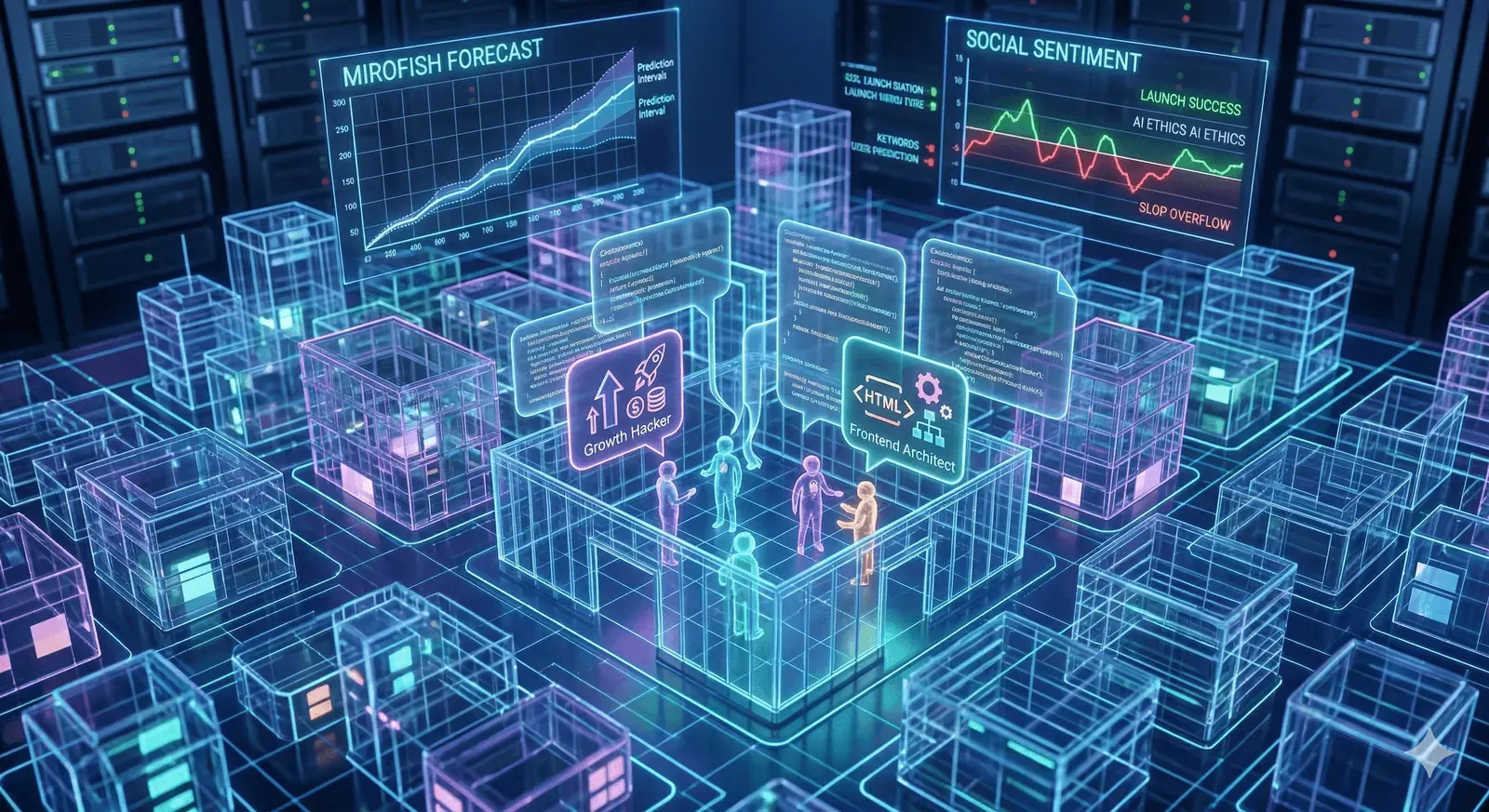

3. MiroFish

The Multi-Agent Prediction Engine

Failure is expensive; predicting it is free. MiroFish is a multi-agent simulation engine that extracts real-time data from financial trends and breaking news to create a “digital twin” of the world.

- Social Simulation: It spins up hundreds of independent agents who “discuss” and react to data, effectively creating a miniature, evolving social network.

- Strategy Forecasting: You can use it to test an app idea at a macro level before writing a single line of code, predicting market reactions with frightening accuracy.

Simulation LimitsForecasting systems can amplify bad assumptions. Validate simulated outcomes with real user or market signals before committing roadmap decisions.

4. Impeccable

The Antidote to “Vibe-Coded” UI Slop

Most AI-generated UIs suffer from “Purple Gradient Syndrome”—they look flashy but are functionally cluttered. Impeccable is a CLI-based tool designed to refine front-end design through 17 specialized commands.

- The Distill Command: This automatically strips away the unnecessary complexity often introduced by LLMs, simplifying your DOM structure.

- Delight & Animate: Once the structure is solid, you can programmatically inject brand colors and micro-interactions to ensure your UI doesn’t look like a generic template.

Practical WorkflowFirst reduce complexity, then add polish. Teams usually get better outcomes with this order than with style-first generation.

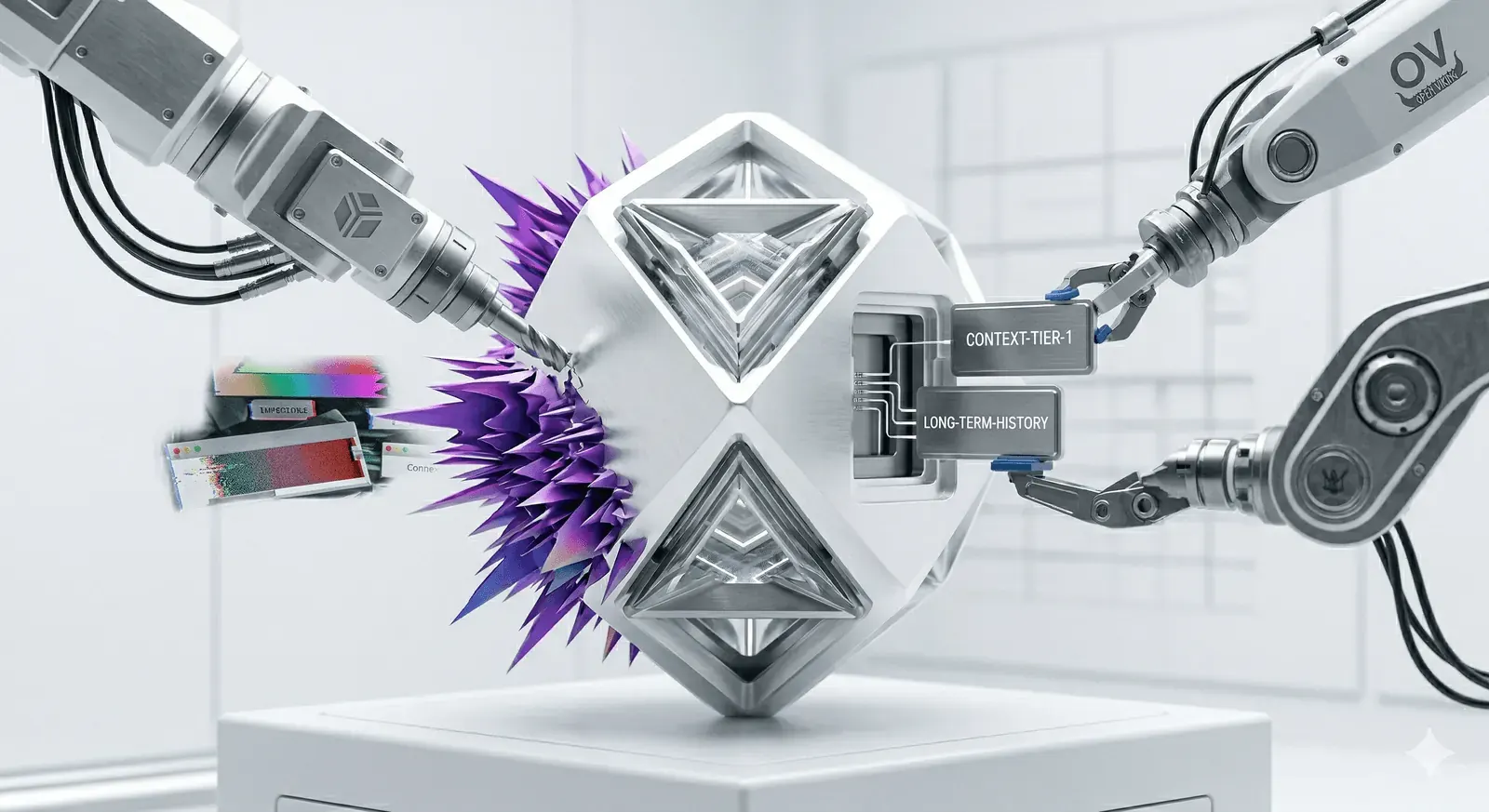

5. Open Viking

Tiered Context Management for Agents

The golden rule of 2026: If the context is garbage, the output is garbage. Open Viking rejects the standard “shove everything into a vector database” approach. Instead, it organizes an agent’s memory, skills, and resources directly into the file system.

- Tiered Loading: It uses a hierarchical system to load only the necessary context for a specific task. This drastically reduces token consumption, saving you thousands in API costs.

- Long-Term Compression: It automatically refines and compresses an agent’s history, meaning the agent actually gets smarter (and cheaper) the more you use it.

Cost ControlContext discipline is often the fastest way to improve both quality and cost in agentic systems.

6. Heretic

The “Un-Woke” Model Liberator

Most frontier models come with “guardrails” that prevent them from performing certain tasks. Heretic uses a technique called obliteration to remove these constraints without the need for expensive post-training or fine-tuning.

- How it works: It targets the specific weights and activations responsible for “refusal” behaviors in models like Google’s Gemma.

- The Result: You get a model that obeys every command without the “as an AI language model…” lecture.

Safety and ComplianceRemoving safety constraints can create legal, policy, and security risks. Use strict governance before any deployment in real environments.

7. NanoChat

The $100 Custom LLM Pipeline

If you don’t trust the big tech giants, build your own. NanoChat (inspired by Andrej Karpathy’s NanoGPT) implements the entire LLM pipeline from scratch—including tokenization, pre-training, and fine-tuning.

- Sovereignty: For about $100 in GPU time, you can train a Small Language Model (SLM) that you have absolute control over.

- Educational Utility: It is the best way to move from being an “AI user” to an “AI architect” by understanding the underlying math of transformers.

Build vs BuyTraining your own small model can improve control and learning velocity, but ongoing maintenance and evaluation still require disciplined engineering.

The Future is Automated

Writing code by hand is becoming as obsolete(or is it?) as weaving fabric by hand. The winners of 2026 won’t be the ones who type the fastest, but the ones who can manage a swarm of agents effectively.